Knowledge Graphs, Language Models, and Knowledge Representation

9 March, 2026 | Carringtone Kinyanjui

Schema Light, Ontology Free Knowledge Graphs

TL;DR

- Turning long, messy legal text into usable structured knowledge is still brittle and ontology-heavy: classic named entity recognition and relation extraction often miss what actually matters in a case and don’t scale cleanly across domains and languages.

- We use attention rollout to identify salient entities and their token-to-token influence, then convert those signals into a weighted influence network in which graph centrality helps select candidate nodes and relations, optionally letting an LLM propose human-readable relation labels.

- On an initial small sample, the approach produces a graph that surfaces key actors and suggests relations, pointing toward a path to schema-light / ontology-free KG construction that we plan to scale to the full corpus.

Karibu!

This will be our new research blog, summarising the things we’re interested in, working on and, yes, abandoning.

A significant fraction of our research and development is dedicated to the problem of structuring knowledge for use in large language models (LLMs) and other forms of artificial intelligence. We believe that the long-term capabilities of AI will be downstream of our ability to develop expansive, multimodal knowledge representation systems. We are investigating multiple schemes for ingesting, injecting and retrieving knowledge, ranging from traditional scaffolding approaches such as retrieval-augmented generation (RAG) to more explicitly structured representations based on knowledge graphs[1]. Across the field, structured knowledge retrieval has become a central concern, reflecting a growing recognition that unstructured text alone is an insufficient substrate for robust reasoning.

Building on earlier knowledge-graph designs, most notably those associated with the semantic web, a major technological development has been the synthesis of retrieval-augmented generation with graph-based representations. These so-called Graph-RAG architectures aim to combine the precision of symbolic knowledge representation with the broad semantic coverage enabled by similarity search in high-dimensional embedding spaces[2].

There are several ways to achieve this synthesis. A common approach embeds selected features of a knowledge graph into a vectorisation scheme and performs semantic similarity search over graph nodes, allowing controlled jumps to neighbouring nodes, or “hops”, to incorporate contextual structure. One example of this approach is Spanner Graph, developed by Google[3]. Alternative schemes avoid explicit embeddings altogether, instead relying on an LLM to perform structured search over a predefined knowledge graph. Collectively, these approaches fall under a broader research program often described as knowledge graphs for LLMs[4].
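The embed-then-hop pattern described above can be illustrated with a small sketch. It is not how Spanner Graph or any particular Graph-RAG system is implemented; the function `retrieve_with_hops`, the two-dimensional vectors and the node names are all hypothetical, chosen only to show similarity search over nodes followed by a controlled expansion to neighbours.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def retrieve_with_hops(query_vec, node_vecs, edges, top_k=1, hops=1):
    """Pick the top_k nodes by similarity, then expand `hops` steps along edges."""
    ranked = sorted(node_vecs, key=lambda n: cosine(query_vec, node_vecs[n]), reverse=True)
    seeds = ranked[:top_k]
    selected = set(seeds)
    frontier = set(seeds)
    for _ in range(hops):
        neighbours = set()
        for a, b in edges:  # edges are treated as undirected
            if a in frontier:
                neighbours.add(b)
            if b in frontier:
                neighbours.add(a)
        frontier = neighbours - selected
        selected |= frontier
    return seeds, selected

# Toy graph with made-up embeddings (assumption: real systems use learned vectors).
node_vecs = {
    "petitioner": [1.0, 0.0],
    "court": [0.0, 1.0],
    "judgment": [0.1, 0.9],
    "statute": [0.7, 0.7],
}
edges = [("petitioner", "court"), ("court", "judgment"), ("judgment", "statute")]

seeds, context = retrieve_with_hops([1.0, 0.1], node_vecs, edges, top_k=1, hops=1)
print(seeds, sorted(context))
```

Raising `hops` widens the context handed to the language model, at the cost of pulling in less relevant nodes.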

A complementary, and in some sense reverse, research direction can be described as LLMs for knowledge graphs[5]. Here, the representational and generative capacities of language models are used to construct, refine, and extend graph-structured knowledge. Because LLMs encode rich models of linguistic and semantic structure, they can be used to specify ontologies, prune or grow graphs, and extract compressed representations of knowledge from raw textual data. Most uses of LLMs

At Haki Research, we have developed an in-house approach that exploits the attention mechanisms of modern LLMs to extract knowledge graphs from unstructured text using schema-free methods. This approach is motivated by three working hypotheses:

- LLMs possess sufficiently rich internal representations [6] that can be mapped to arbitrary chunks of text observed at inference time.

- These representations, when combined with attention scores [7], can be used to generate saliency measures for entities appearing in the text.

- Saliency scores are related to graph-theoretic notions of centrality for the corresponding entities[8].

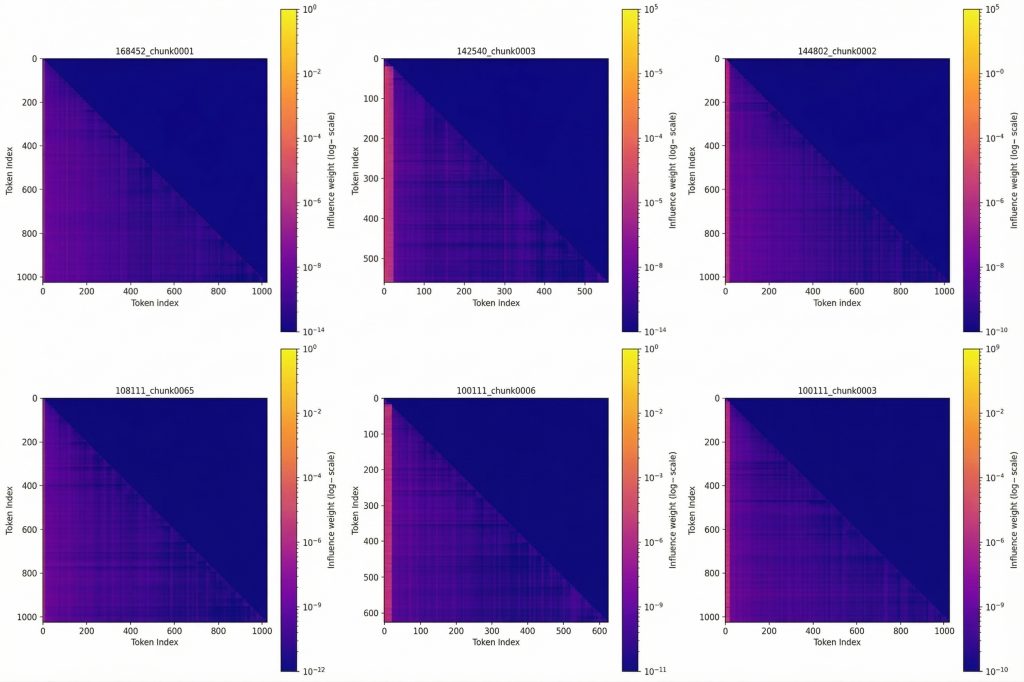

Methodologically, we make use of attention rollout techniques from mechanistic interpretability[9]. We developed our pipeline using data obtained from the National Council of Law Reporting, whose repository holds 250,000 legal case documentation files in Kenya. For this initial pipeline, we worked with only 15 documents. Each document is split into chunks of a few hundred tokens, and each chunk is passed to the language model. Attention rollout allows us to compute attention scores for all tokens within a given text chunk, aggregating attention successively across layers to derive mutual influence scores. The result can be summarised as an influence matrix (Figure 1).

Figure 1. Attention-rollout influence matrices (token-token mutual influence) for selected document chunks; brighter regions indicate stronger mutual influence on a log scale.
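For readers unfamiliar with attention rollout, here is a minimal sketch of one common formulation: average the heads in each layer, mix in the identity to account for residual connections, and multiply the layer matrices together. The post does not specify which variant our pipeline uses, so the `residual_weight` of 0.5, the head averaging and the toy attention values below are assumptions for illustration only.

```python
def matmul(a, b):
    """Plain-Python matrix product of two lists of lists."""
    inner, cols = len(b), len(b[0])
    return [[sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)] for row in a]

def mean_heads(layer):
    """Average a layer's per-head attention matrices into one matrix."""
    heads, n = len(layer), len(layer[0])
    return [[sum(head[i][j] for head in layer) / heads for j in range(n)] for i in range(n)]

def attention_rollout(layers, residual_weight=0.5):
    """Aggregate attention across layers into a token-to-token influence matrix.

    layers: list of layers, each a list of heads, each an n x n row-stochastic matrix.
    """
    n = len(layers[0][0])
    rollout = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for layer in layers:
        attn = mean_heads(layer)
        # Mix attention with the identity to model the residual stream.
        mixed = [
            [(1 - residual_weight) * attn[i][j] + (residual_weight if i == j else 0.0)
             for j in range(n)]
            for i in range(n)
        ]
        # Renormalise rows so each row remains a distribution over tokens.
        mixed = [[x / sum(row) for x in row] for row in mixed]
        # Later layers are applied on the left of the accumulated product.
        rollout = matmul(mixed, rollout)
    return rollout

# Toy attention for a 3-token chunk (values are made up, not model output).
head_a = [[0.8, 0.1, 0.1], [0.3, 0.6, 0.1], [0.2, 0.5, 0.3]]
head_b = [[0.6, 0.2, 0.2], [0.1, 0.8, 0.1], [0.4, 0.4, 0.2]]
layers = [[head_a, head_b], [head_b]]

influence = attention_rollout(layers)
for row in influence:
    print([round(x, 3) for x in row])
```

Entry `influence[i][j]` estimates how much token `j` contributes to token `i` after all layers. In practice the per-layer matrices would come from the model's attention outputs rather than hand-written lists.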

This gives us a measure of how strongly one word influences another and vice versa. From these mutual influences, we derive saliency scores that quantify how important a given token or entity is relative to others in the same context. These saliency scores can then be promoted to centrality measures by explicitly reading out relationships between entities. The argument can be stated concisely:

“Rather than a sequence of words, a chunk of text is better modelled as a network, with dominant words more central to the network.”

There is some support for this relational paradigm of transformers [10] and the utility of relationalism in general [11].
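One simple way to turn an influence matrix into saliency scores is sketched below. The exact scoring rule is not given in this post, so treat the choices here as assumptions: influence is symmetrised into a mutual score, a token's saliency is its total mutual influence with every other token, and an entity's saliency is the sum over its tokens. The function names and the toy matrix are hypothetical.

```python
def mutual_influence(rollout):
    """Symmetrise a rollout matrix so entry (i, j) reflects influence in both directions."""
    n = len(rollout)
    return [[0.5 * (rollout[i][j] + rollout[j][i]) for j in range(n)] for i in range(n)]

def token_saliency(rollout):
    """Saliency of each token: total mutual influence with all other tokens, normalised to sum to 1."""
    m = mutual_influence(rollout)
    n = len(m)
    raw = [sum(m[i][j] for j in range(n) if j != i) for i in range(n)]
    total = sum(raw)
    return [r / total for r in raw] if total else raw

def entity_saliency(saliency, spans):
    """Aggregate token saliency over the token indices that make up each entity."""
    return {name: sum(saliency[i] for i in idxs) for name, idxs in spans.items()}

# Toy 3-token influence matrix (made-up values, not model output).
rollout = [[0.6, 0.3, 0.1], [0.2, 0.5, 0.3], [0.1, 0.6, 0.3]]
scores = token_saliency(rollout)

# Hypothetical entity spans: token 0 is one entity, tokens 1-2 form another.
spans = {"petitioner": [0], "court judgment": [1, 2]}
entities = entity_saliency(scores, spans)
print([round(s, 4) for s in scores], entities)
```

Summing over a span favours longer entities; averaging instead would be an equally defensible choice.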

Once saliency scores have been derived from the attention mechanism, we assemble the salient entities into triples of subject, relation and object. Triples that share a node (most likely one of the important nodes) can then be joined to grow a network. This network constitutes our knowledge graph.
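The step from weighted influence to candidate triples might look roughly like the sketch below. It uses weighted degree as the centrality measure and a fixed weight threshold, both of which are assumptions made for brevity. The placeholder label `RELATED_TO`, the function names and the edge weights are hypothetical; in our pipeline a human-readable relation label can optionally be proposed by an LLM.

```python
from itertools import combinations

def weighted_degree(weights):
    """Weighted degree centrality: sum of incident edge weights per node."""
    strength = {}
    for (a, b), w in weights.items():
        strength[a] = strength.get(a, 0.0) + w
        strength[b] = strength.get(b, 0.0) + w
    return strength

def candidate_triples(weights, top_k=3, min_weight=0.2):
    """Pair up the top_k most central nodes and keep pairs with strong mutual influence."""
    strength = weighted_degree(weights)
    top = sorted(strength, key=strength.get, reverse=True)[:top_k]
    triples = []
    for a, b in combinations(top, 2):
        w = weights.get((a, b), weights.get((b, a), 0.0))
        if w >= min_weight:
            # "RELATED_TO" is a placeholder until a relation label is proposed.
            triples.append((a, "RELATED_TO", b, w))
    return sorted(triples, key=lambda t: t[3], reverse=True)

# Toy entity-level mutual influence scores (made up for illustration).
weights = {
    ("petitioner", "court"): 0.5,
    ("court", "judgment"): 0.4,
    ("petitioner", "judgment"): 0.1,
    ("the", "court"): 0.05,
}

triples = candidate_triples(weights)
for subj, rel, obj, w in triples:
    print(subj, rel, obj, w)
```

Low-weight nodes such as "the" drop out here because of their low centrality, without any hardcoded stop-word list.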

Results

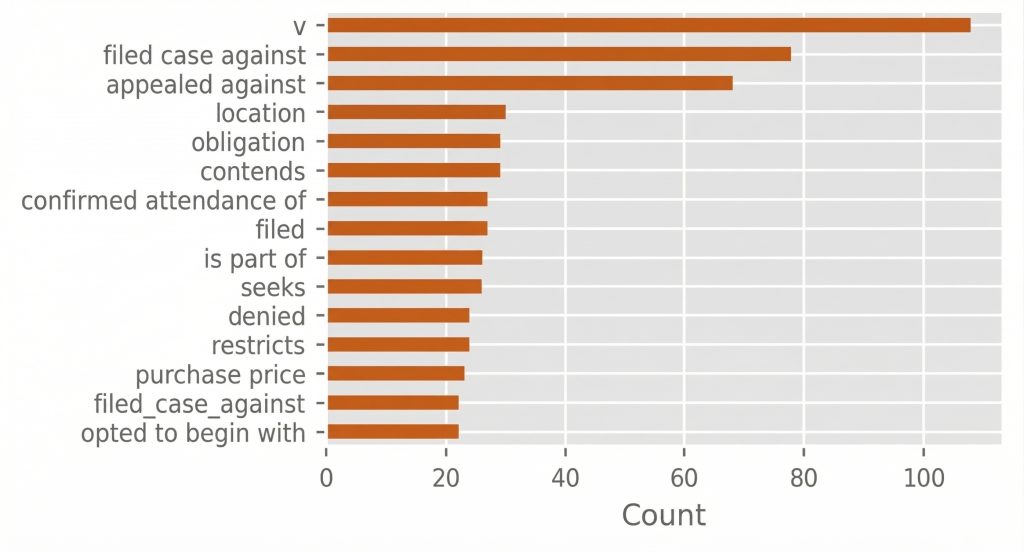

We begin by inspecting the types of relationships extracted from the nodes with the aid of an LLM (GPT-4). The most important relationships extracted are associated with legal proceedings, showing that the attention mechanism is able to extract meaningful relationships between salient nodes. It should be noted that we specified no ontology or schema to help us find important relationships in the documents processed; we trusted relationalism.

Figure 2. Most frequent extracted relation phrases from the schema-free triple induction step (counts across processed chunks).
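A tally like the one in Figure 2 can be produced with a simple frequency count over the relation slot of the extracted triples. The sketch below assumes triples are stored as `(subject, relation, object)` tuples; the example triples are invented for illustration and are not taken from our results.

```python
from collections import Counter

def relation_counts(triples):
    """Count relation phrases across triples, ignoring case and surrounding whitespace."""
    return Counter(rel.strip().lower() for _, rel, _ in triples)

# Hypothetical triples, as they might come out of several chunks.
sample_triples = [
    ("petitioner", "filed petition against", "commission"),
    ("court", "dismissed", "petition"),
    ("applicant", "Filed petition against ", "respondent"),
]

counts = relation_counts(sample_triples)
print(counts.most_common(2))
```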

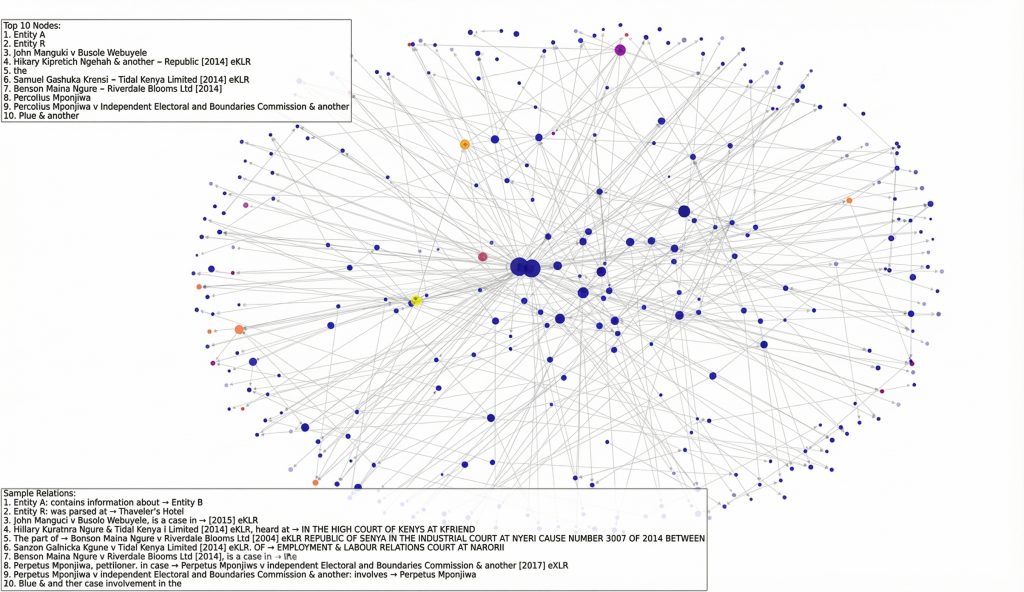

Next, we inspect the salient nodes in the network, which were extracted from attention rollout:

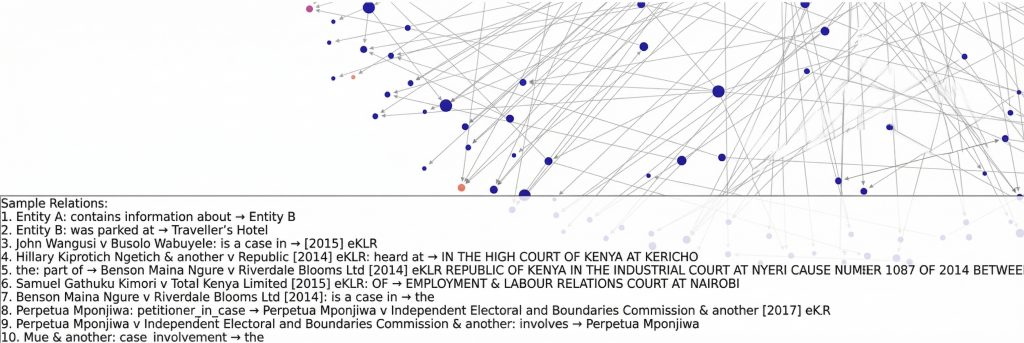

Figure 3. Salient-node network induced from attention rollout, with the most central nodes highlighted and representative extracted triples shown for qualitative inspection.

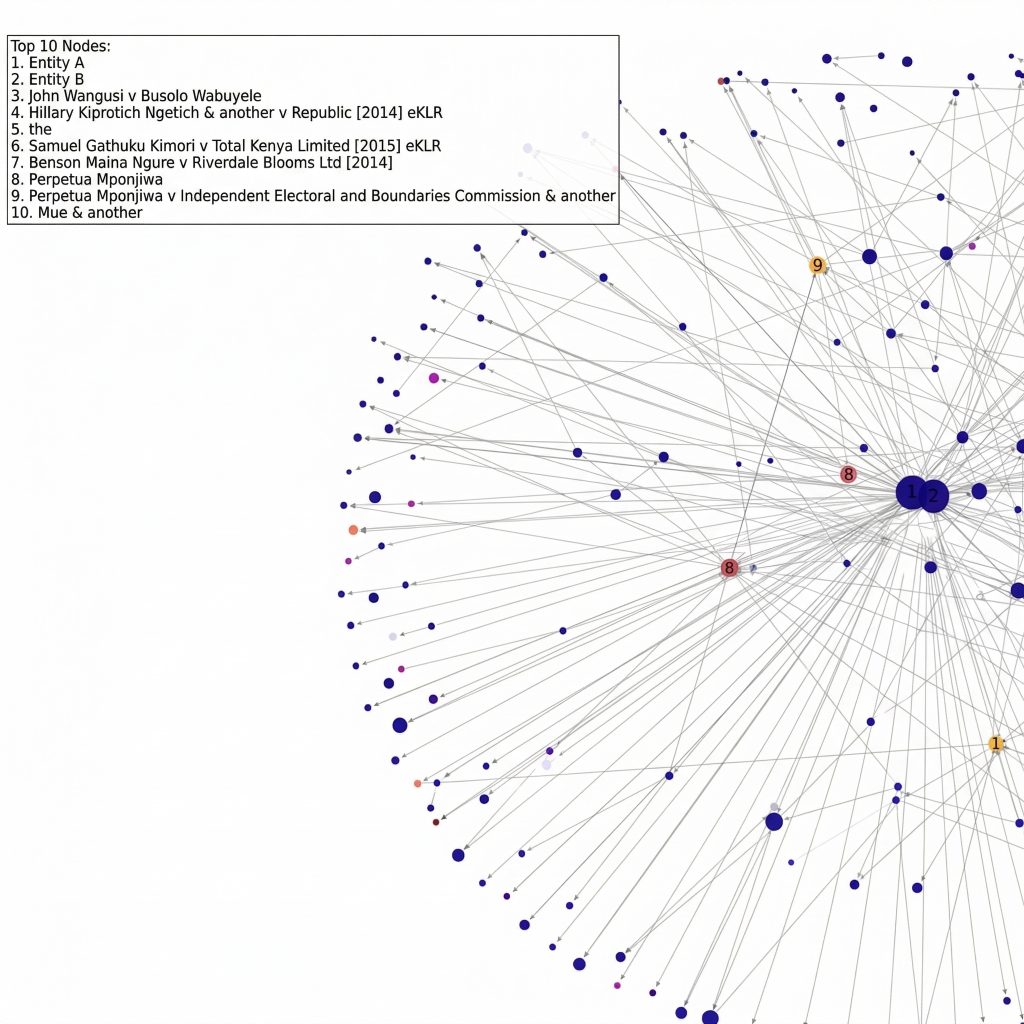

Figure 4. Zoomed view of the induced network showing the top-ranked nodes (centrality-based) within the broader graph structure.

At first sight, the pipeline seems to extract interesting nodes. Some look anomalous, such as “the” (node number 5), while “Perpetua Mponjiwa” and “Perpetua Mponjiwa v Independent Electoral and Boundaries Commission & another” (nodes 8 and 9) look like duplicated entries. Closer inspection of the node relationships, however, shows otherwise.

Figure 5. Example extracted triples (“Sample Relations”) illustrating the subject–relation–object forms produced by the pipeline, including case and party entities.

Relations 8 and 9 show that “Perpetua Mponjiwa” and “Perpetua Mponjiwa v Independent Electoral and Boundaries Commission & another” are in fact two different nodes: the first is a petitioner and the second is a case. The system recognises them as different entities.

Limitations

We have only tested this on legal data, as we were severely limited in compute when carrying out the experiment. The limitation was amplified by the fact that the attention rollout calculation is computationally intensive, taking a few minutes for every chunk. We also have some noise to tune out of our initial knowledge graph. We want to resist hardcoded cleaning methods here and are in search of more conceptually clean ones. We have a fully developed agentic system based on the data, which is already in deployment in Kenya. It provides a baseline against which we are very interested in measuring this approach.

Ongoing Work

We are currently scaling these methods to operate over our complete dataset of approximately 250,000 documents. While our initial applications have focused on legal text, we are actively exploring the transfer of these techniques to domains unrelated to law. To support this work, we have secured a compute budget of $6,000 from Google, alongside additional support from MongoDB, to enable further development and experimentation with this technology. We’re also interested in more speculative methods which may allow us to inject full knowledge graphs into LLMs, which would demand a sophisticated understanding of knowledge representation.

References

- Wang, C., Liu, X., Yue, Y., Tang, X., Zhang, T., Jiayang, C., Yao, Y., Gao, W., Hu, X., Qi, Z. and Wang, Y., 2023. Survey on factuality in large language models: Knowledge, retrieval and domain-specificity. arXiv preprint arXiv:2310.07521.

- Edge, D., Trinh, H., Cheng, N., Bradley, J., Chao, A., Mody, A., Truitt, S., Metropolitansky, D., Ness, R.O. and Larson, J., 2024. From local to global: A graph rag approach to query-focused summarization. arXiv preprint arXiv:2404.16130.

- Google Cloud (2026) Spanner Graph overview. Google Cloud Documentation. Available at: (Spanner Graph overview | Google Cloud Documentation) (Accessed: 27 February 2026).

- Pan, S., Luo, L., Wang, Y., Chen, C., Wang, J. and Wu, X., 2024. Unifying large language models and knowledge graphs: A roadmap. IEEE Transactions on Knowledge and Data Engineering, 36(7), pp.3580-3599.

- Wang, X., Xiang, Y., Gui, L. and He, Y., 2025, July. PECAN: LLM-Guided Dynamic Progress Control with Attention-Guided Hierarchical Weighted Graph for Long-Document QA. In Findings of the Association for Computational Linguistics: ACL 2025 (pp. 13317-13335).

- Huh, M., Cheung, B., Wang, T. and Isola, P., 2024. The platonic representation hypothesis. arXiv preprint arXiv:2405.07987.

- Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A.N., Kaiser, Ł. and Polosukhin, I., 2017. Attention is all you need. Advances in neural information processing systems, 30. – (No for real bro, relationalism is all you need)

- West, D.B., 2001. Introduction to graph theory (Vol. 2, pp. 1-150). Upper Saddle River: Prentice hall.

- Chefer, H., Gur, S. and Wolf, L., 2021. Transformer interpretability beyond attention visualization. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (pp. 782-791).

- Joshi, C.K., 2025. Transformers are graph neural networks. arXiv preprint arXiv:2506.22084.

- Bain, R., Festa, A., Lee, G.Y. and Zhang, A., 2022. Wittgenstein’s philosophy of language: The philosophical origins of modern NLP thinking. ArXiv, pp.1-21.